Engagement Intelligence Lives with Your Best People

You can build a conversational AI tool in an afternoon. Pick a platform, upload your benefits documents, FAQs, and care protocols, and write some instructions about tone. A member asks a question, and the tool answers from your files.

It works. It's accurate. And it sounds exactly like every other tool anyone has ever built.

This is where most organizations stop designing. The tool works, the answers are correct, so the next move is scale. Integrate it into the product, personalize it, add more documents. The assumption is that the gap between "accurate" and "good" is an engineering problem that will close with more data and better infrastructure.

But that's not what's missing.

Ask your best navigator what they say when a member calls confused about a prior authorization. Not what the document says, but what they say. How they read the frustration in the question. How they adjust when someone is calling for the third time versus the first, and how they know when the real question isn't the one being asked.

Ask your field team how they explain a benefit differently depending on whether someone is managing a new diagnosis or a chronic condition. Not different information, but different framing, different entry points, different weight on different words.

Ask your operations lead which edge cases actually matter, the ones that show up in 2% of calls but drive 40% of the dissatisfaction.

That knowledge about the human interaction is real and specific, and it changes outcomes. None of it is in any document you uploaded.

We call it engagement intelligence. It's the distance between a correct answer and the answer your best person would give.

Most of the conversation right now is about personalization, and personalization matters. Knowing who someone is, what plan they're on, what they've already tried. But personalization is often treated as an engineering problem: connect the data, populate the fields, add the name.

The harder thing is relevance. When someone asks "Do I need to see my doctor before I go back to work?" three days after a discharge, they're not asking a clinical question. They're asking about Monday. Whether they can afford another day off. Whether this is going to be simple or complicated. Your best navigator hears all of that in six words. A personalized tool that knows their name and plan ID still misses it entirely.

Relevance isn't a feature you add. It's a judgment that lives in people, in the accumulated pattern recognition of someone who's had ten thousand versions of the same conversation and learned what actually works: what to say first, what to leave out, and which question to answer when it isn't the one on the surface. If that judgment isn't in the tool, the tool sounds like a well-informed stranger, someone who read everything but experienced nothing.

So how does the judgment get in?

The instinct is to document it. If the knowledge is missing, write it down. But engagement intelligence resists documentation in the traditional sense because it's contextual and tonal and it lives in the way someone says something, not just what they say.

Hilary Gridley has been writing about the collapsing distinction between writing and building. She turns her essays into Custom GPT system prompts, and the framework becomes the tool. The same thing is happening here. When your care manager sits down and writes out how she actually explains post-discharge follow-up, not the clinical protocol but the way she'd say it to someone who's worried about missing work, that writing is the product. The knowledge file isn't documentation. It's the intelligence the tool runs on.

Words can just do things now.

Your tool today

But you can't write what you don't know is missing, which is where the tool itself becomes the diagnostic instrument.

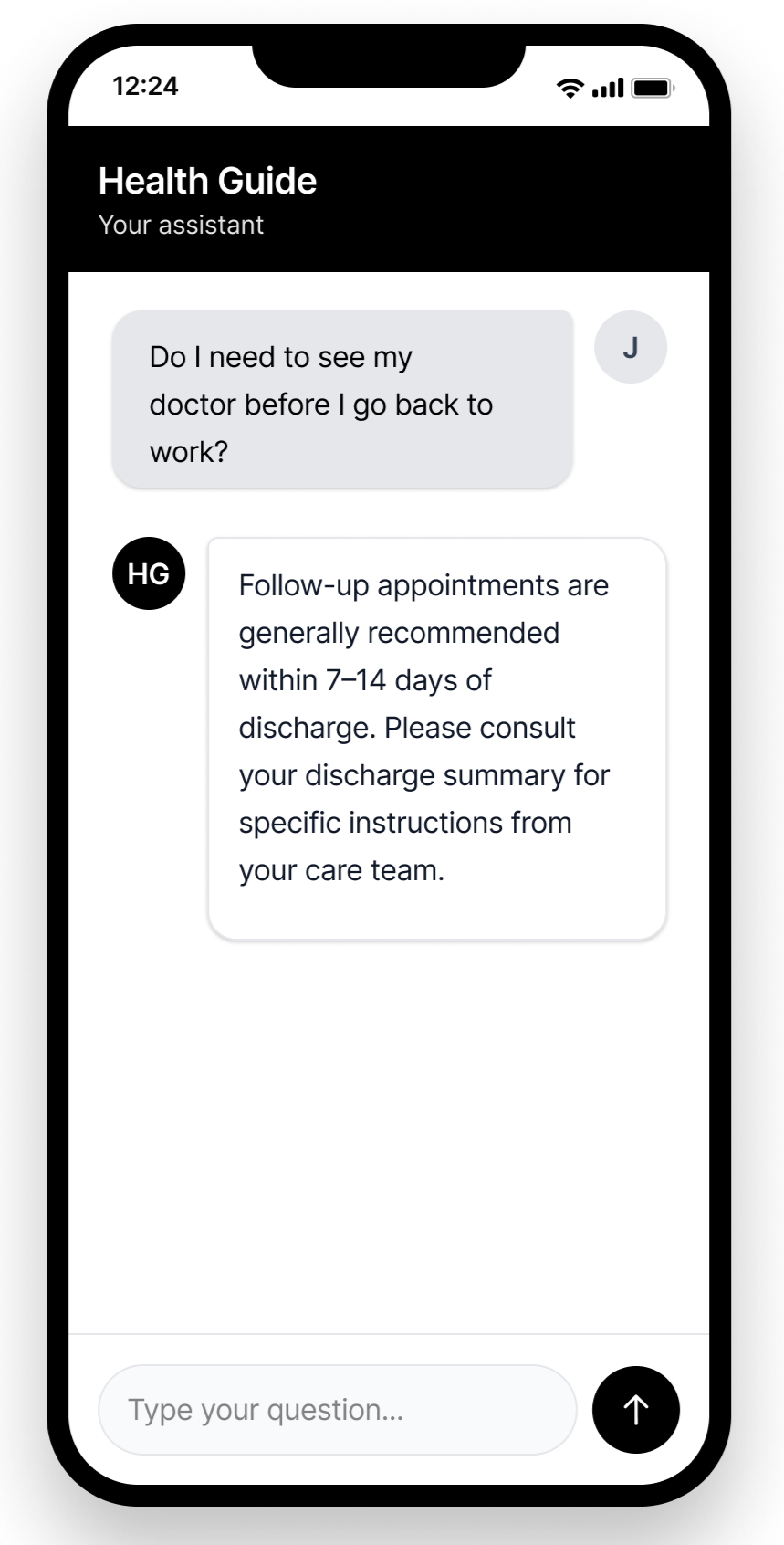

A member gets home after a discharge and opens a chat: "Do I need to see my doctor before I go back to work?"

The first version of the tool gives a correct, useless answer (Your tool today).

The member wants to know whether they need a visit, how to get one, and whether they're cleared to go back Monday. The answer they get is accurate and generic.

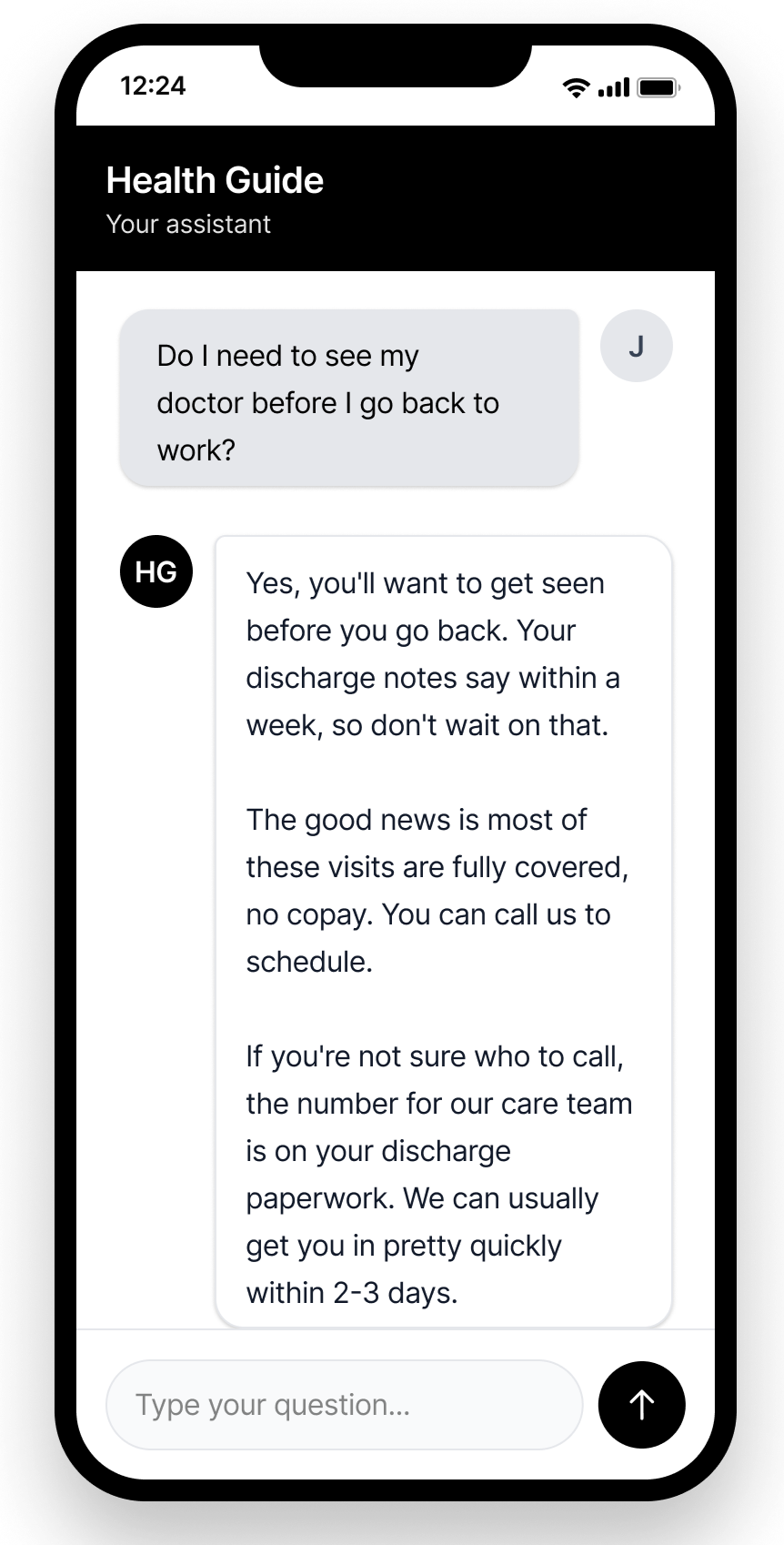

The failure is the point. You put this first version in front of your team and they start catching things. Someone says "oh, people ask that all the time, I just tell them..." and now that knowledge is in a file. Someone else notices the tool hedges on a question the team answers confidently every day. The operations lead flags an edge case the documents never covered, because it never needed to be written down: the person who handles it has been there nine years.

Each of those moments is engagement intelligence becoming visible. The tool is just the instrument that reveals where it lives and where it doesn't.

Then you put it in front of real users. A branded experience, a QR code at a worksite, a link in a follow-up message. Real users ask questions your team never anticipated. They phrase things differently than expected. They ask about family members and situations the documents don't cover. Every gap is a piece of engagement intelligence that was invisible until someone needed it.

Behavioral patterns start to form, and the revealing ones aren't satisfaction scores or usage counts. Three are worth watching. What people ask after the first answer, because the follow-up question tells you whether the first answer actually landed or just sounded complete. Where the tool hedges or redirects to "contact your care team," because each hedge marks a place where the knowledge architecture is thin. Which entry points people actually use versus the ones you assumed they would.
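For a team that wants to pull those signals out of chat transcripts, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `Turn` record, the `entry_point` labels, and especially the `HEDGE_PHRASES` list, which in practice would come from reading your own tool's replies. It only surfaces candidates; deciding what each one means is still a job for your best people.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical hedge phrases. Replace with phrases your own tool actually uses.
HEDGE_PHRASES = ("contact your care team", "i'm not sure", "i don't have that information")


@dataclass
class Turn:
    conversation_id: str
    role: str         # "member" or "tool"
    text: str
    entry_point: str  # hypothetical labels, e.g. "qr_code" or "follow_up_link"


def is_hedge(text: str) -> bool:
    """True if a tool reply contains one of the known hedge phrases."""
    lowered = text.lower()
    return any(phrase in lowered for phrase in HEDGE_PHRASES)


def summarize(turns: list[Turn]) -> dict:
    """Collect hedged questions, first follow-ups, and entry-point counts."""
    # Group turns by conversation, preserving the order they arrived in.
    by_conversation: dict[str, list[Turn]] = {}
    for turn in turns:
        by_conversation.setdefault(turn.conversation_id, []).append(turn)

    hedged_questions: list[str] = []
    follow_ups: list[tuple[str, str]] = []
    entry_points: Counter = Counter()

    for convo in by_conversation.values():
        entry_points[convo[0].entry_point] += 1

        # The second member message is the follow-up to the first answer.
        member_texts = [t.text for t in convo if t.role == "member"]
        if len(member_texts) >= 2:
            follow_ups.append((member_texts[0], member_texts[1]))

        # Record the member question that preceded each hedged reply.
        last_question = None
        for t in convo:
            if t.role == "member":
                last_question = t.text
            elif last_question is not None and is_hedge(t.text):
                hedged_questions.append(last_question)

    return {
        "hedged_questions": hedged_questions,
        "follow_ups": follow_ups,
        "entry_points": entry_points,
    }
```

The output is deliberately raw: lists of questions and pairs rather than scores, so a navigator or care manager can read them and say which ones point to a real gap.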

Shaped by your best person

Julie Zhuo described the same dynamic in data analytics recently. AI can generate SQL, produce dashboards, and write explanations that sound confident, but more analysis doesn't mean better decisions. An executive asks why retention dropped and AI produces five plausible explanations, each with supporting charts. A strong data curator sees immediately that one is wrong, three are technically true but misleading, and only one actually changes strategy. The bottleneck moved from generating the analysis to knowing what's true.

The same thing happens here. The tool generates a flood of behavioral evidence, and the question isn't whether you have data but whether you have someone who can read it. Someone who can tell the difference between a phrasing variation and a genuine knowledge gap, or between a channel metric that reflects real engagement and one that just reflects convenience. That kind of reading is engagement intelligence at the organizational level.

Over time, the knowledge files get rewritten, restructured around how people actually ask rather than how the organization thinks about the information. New files get created for questions the tool had nothing for. The engagement intelligence gets deeper with each iteration.
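One way to picture a knowledge file organized around how people ask is sketched below in Python. The `KnowledgeEntry` fields and the rendered layout are hypothetical, not a format any platform requires, and the angle-bracket placeholders stand in for words only your care manager can write.

```python
from dataclasses import dataclass


@dataclass
class KnowledgeEntry:
    """One practitioner-written entry, keyed by the member's words (hypothetical schema)."""
    member_phrasings: list[str]
    really_asking: str
    say_first: str
    leave_out: str = ""
    next_step: str = ""


def render_knowledge_file(entries: list[KnowledgeEntry]) -> str:
    """Render entries as plain text that could be uploaded as a knowledge file."""
    sections = []
    for entry in entries:
        lines = ["When a member asks something like:"]
        lines += [f'- "{phrasing}"' for phrasing in entry.member_phrasings]
        lines.append(f"They are usually asking: {entry.really_asking}")
        lines.append(f"Say first: {entry.say_first}")
        # Optional fields are skipped when the practitioner left them blank.
        if entry.leave_out:
            lines.append(f"Leave out: {entry.leave_out}")
        if entry.next_step:
            lines.append(f"Next step: {entry.next_step}")
        sections.append("\n".join(lines))
    return "\n\n".join(sections)


entry = KnowledgeEntry(
    member_phrasings=["Do I need to see my doctor before I go back to work?"],
    really_asking="Whether they can go back Monday and whether they can afford another day off.",
    say_first="<your care manager's own words>",
    next_step="<how to book the follow-up visit>",
)
print(render_knowledge_file([entry]))
```

The structure matters less than the ordering: the entry starts from the member's phrasing and the question underneath it, not from the organization's category for the information.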

Same question. Same tool.

In this second version, shaped by your best person, the conversation carries intelligence it didn't have before (Shaped by your best person). It answers the actual question, addresses cost, and gives a next step. The difference isn't engineering. It's what's in the knowledge files, and what's in the knowledge files is what your best people knew all along, made visible by the tool's failures and rebuilt by their judgment.

Built from engagement intelligence

The question most organizations are asking is "How do we personalize this?" How do we connect member data, tailor responses, and make the tool know who it's talking to? Those are real problems, but they're the next problems. The first one, the one that determines whether any of that personalization actually matters, is whether the tool carries the judgment that makes an answer relevant rather than just accurate. Whether the intelligence that lives in your best people has made it into the system.

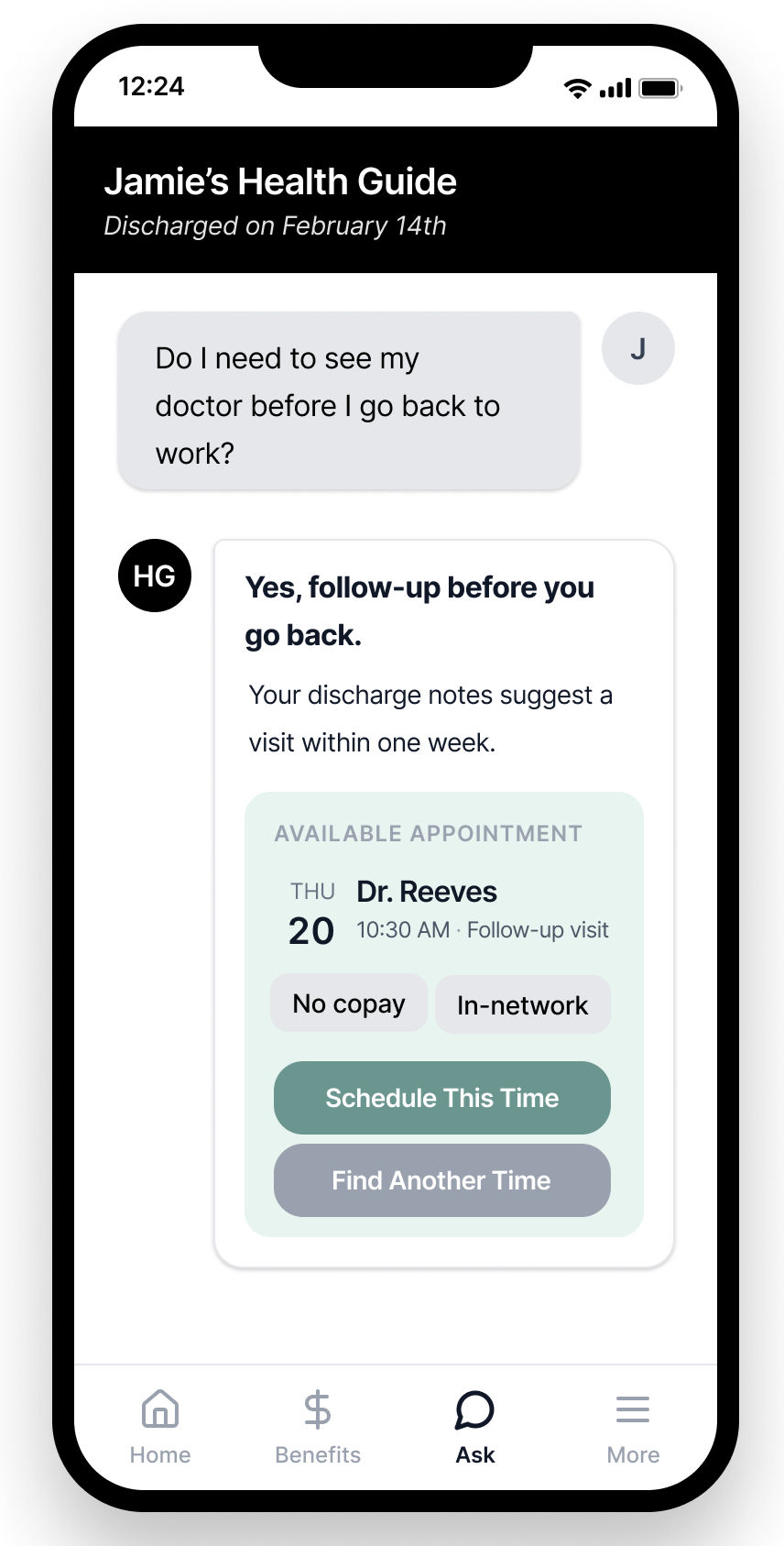

When the tool finally does know who someone is, relevance and personalization work together (Built from engagement intelligence). The answer isn't just accurate or even well-framed. It becomes an action. But that only works because the conversation design was already carrying the right intelligence. Personalization without relevance just means delivering a generic answer faster, with someone's name on it.

The platforms will keep getting better and the infrastructure will keep getting cheaper. What won't get easier is the work underneath, the work of understanding what your tool should say, to whom, in what tone, and why. Ask your tool the question your members ask most. Then ask your best person the same question. The distance between those two answers is the work.